How I Built a Full-Stack App in 6 Hours Without Writing Code

What if building software didn't require writing code — just clear thinking?

You Don't Need to Code to Build Real Software

I built a fully functional web application — with user login, a database, a polished interface, and AI-powered conversations — without writing a single line of code myself.

This isn't about no-code platforms or drag-and-drop builders. The project comprises 17,948 lines of real, production-grade code across 64 files. I could deploy it today.

The method is called Harness Engineering: you make the decisions, AI writes the code. You're the architect; AI is the builder.

I want to share this story not to show off, but because I believe this changes who gets to build software. You don't need years of coding experience. You need clear thinking, good judgment, and the ability to communicate what you want.

What Is Harness Engineering?

Traditional software development works like this:

Human writes code → Machine runs it

Harness Engineering flips this:

Human defines what to build → AI writes the code → Human reviews and decides

You become the decision-maker. You choose what to build, how it should work, and what trade-offs to accept. The AI handles the mechanical work — writing code, creating tests, generating documentation.

This matters because the hardest part of building software was never typing. It was always knowing what to build and how it should work. That part is still yours. The typing part? AI can handle that now.

Four Principles That Make It Work

1. Clear constraints produce better results

Telling AI "make it work" gets mediocre results. Telling AI "all database queries must go through a repository layer, never called directly from the API" gets excellent results. The clearer your rules, the better the AI performs.

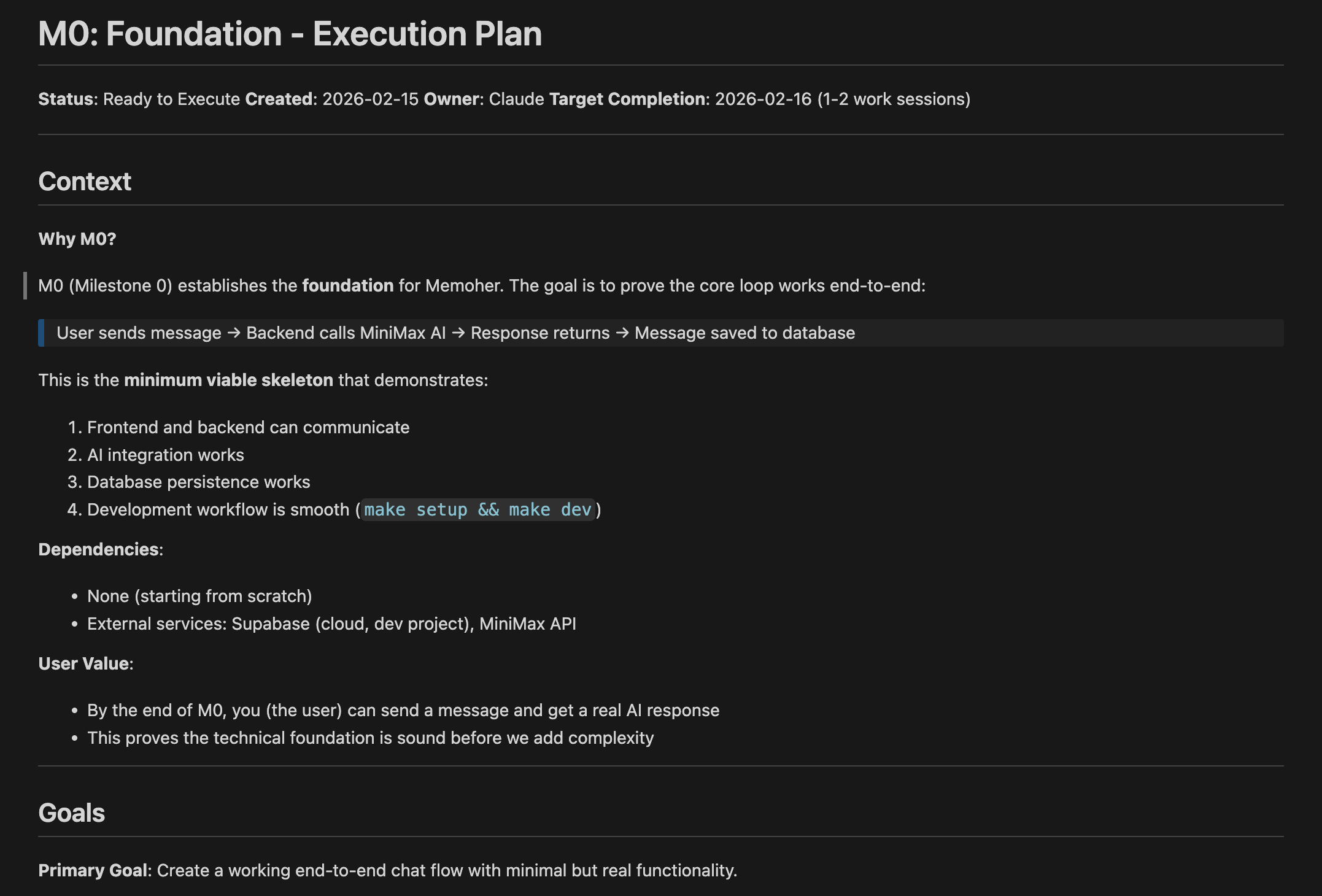
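As a rough illustration of the repository rule quoted above, here is a minimal Python sketch. The names (`Message`, `MessageRepository`, `InMemoryMessageRepository`, `handle_send_message`) are hypothetical and not taken from Memoher's code, and an in-memory list stands in for the real database so the example runs on its own.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Protocol


@dataclass(frozen=True)
class Message:
    conversation_id: str
    role: str  # "user" or "assistant"
    text: str


class MessageRepository(Protocol):
    """The only interface the API layer is allowed to use for storage."""

    def add(self, message: Message) -> None: ...

    def list_for(self, conversation_id: str) -> list[Message]: ...


class InMemoryMessageRepository:
    """Stand-in for a database-backed repository (an assumption for this sketch)."""

    def __init__(self) -> None:
        self._rows: list[Message] = []

    def add(self, message: Message) -> None:
        self._rows.append(message)

    def list_for(self, conversation_id: str) -> list[Message]:
        return [m for m in self._rows if m.conversation_id == conversation_id]


def handle_send_message(
    repo: MessageRepository, conversation_id: str, text: str
) -> list[Message]:
    """API-layer handler: it talks to the repository, never to the database."""
    repo.add(Message(conversation_id, "user", text))
    return repo.list_for(conversation_id)
```

Because the handler only depends on the `MessageRepository` interface, swapping the in-memory version for a real database implementation would not require touching the API code, which is the kind of boundary a clear constraint gives the AI to respect.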

2. Write your plan before any code

I spent the first hour writing documents, not code: an architecture plan, design principles, and quality standards. These documents became the AI's instruction manual. By my estimate, every hour of planning saved about five hours of confusion later.

3. Build in small, complete milestones

Don't try to build everything at once. I defined two milestones:

- M0: The simplest version that works end-to-end (send a message, get an AI response, save it)

- M1: Production-ready (real login, conversation history, polished design)

Each milestone is useful on its own. If I stopped after M0, I'd still have a working prototype.

4. Make success measurable

Write "user can send a message and receive a response within 5 seconds" (testable), not "make the UI better" (too vague). Objective criteria let the AI work independently without constant hand-holding.
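One way to make a criterion like this checkable is to turn it into a timed test. The Python sketch below is an assumption about how that could look, not the test suite from the project: `send_message` is a hypothetical placeholder for whatever call sends a message and waits for the reply, and `fake_send_message` stands in for it so the example runs offline.

```python
from __future__ import annotations

import time
from typing import Callable

MAX_RESPONSE_SECONDS = 5.0  # taken from the criterion: "within 5 seconds"


def check_response_time(
    send_message: Callable[[str], str], text: str
) -> tuple[bool, float]:
    """Return (passed, elapsed_seconds) for one send/receive round trip."""
    start = time.perf_counter()
    reply = send_message(text)
    elapsed = time.perf_counter() - start
    # Pass only if a non-empty reply arrived inside the time limit.
    passed = bool(reply) and elapsed <= MAX_RESPONSE_SECONDS
    return passed, elapsed


def fake_send_message(text: str) -> str:
    """Hypothetical stand-in for the real app call."""
    return f"echo: {text}"


passed, elapsed = check_response_time(fake_send_message, "hello")
print("PASS" if passed else "FAIL")
```

A check like this gives the AI a clear pass/fail signal to work toward, where "make the UI better" gives it nothing to measure.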

The Build: From Empty Folder to Working App

What I Built

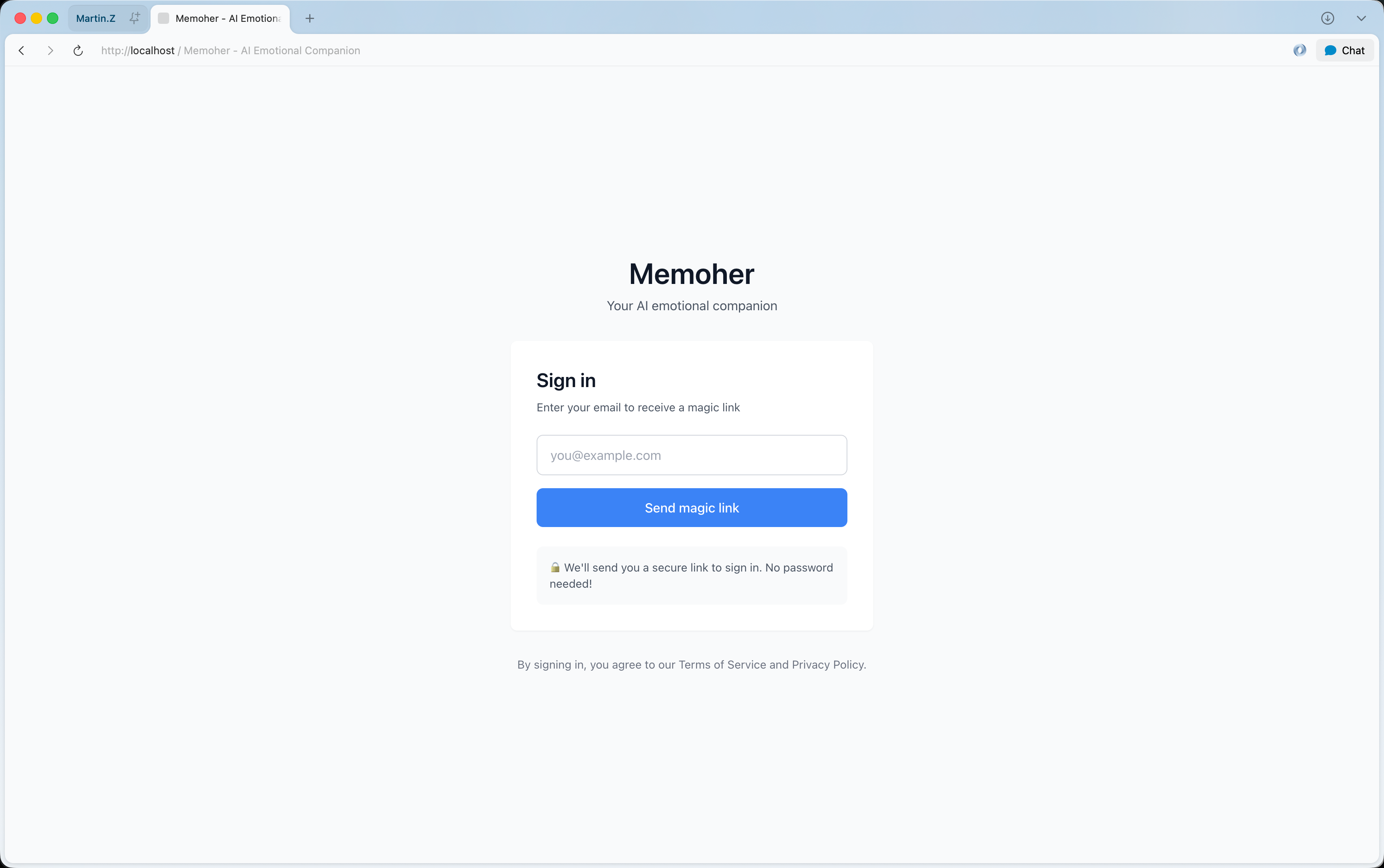

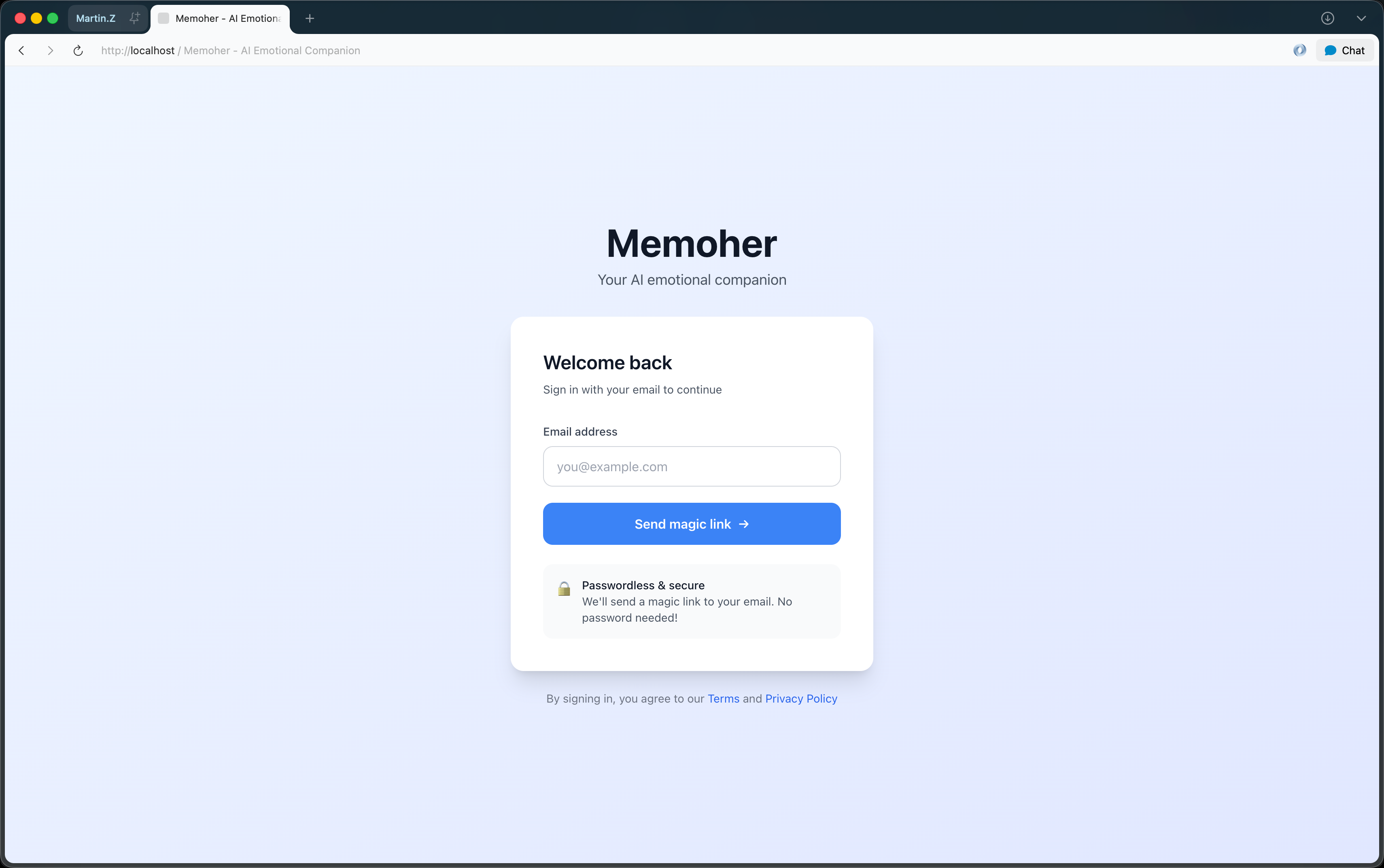

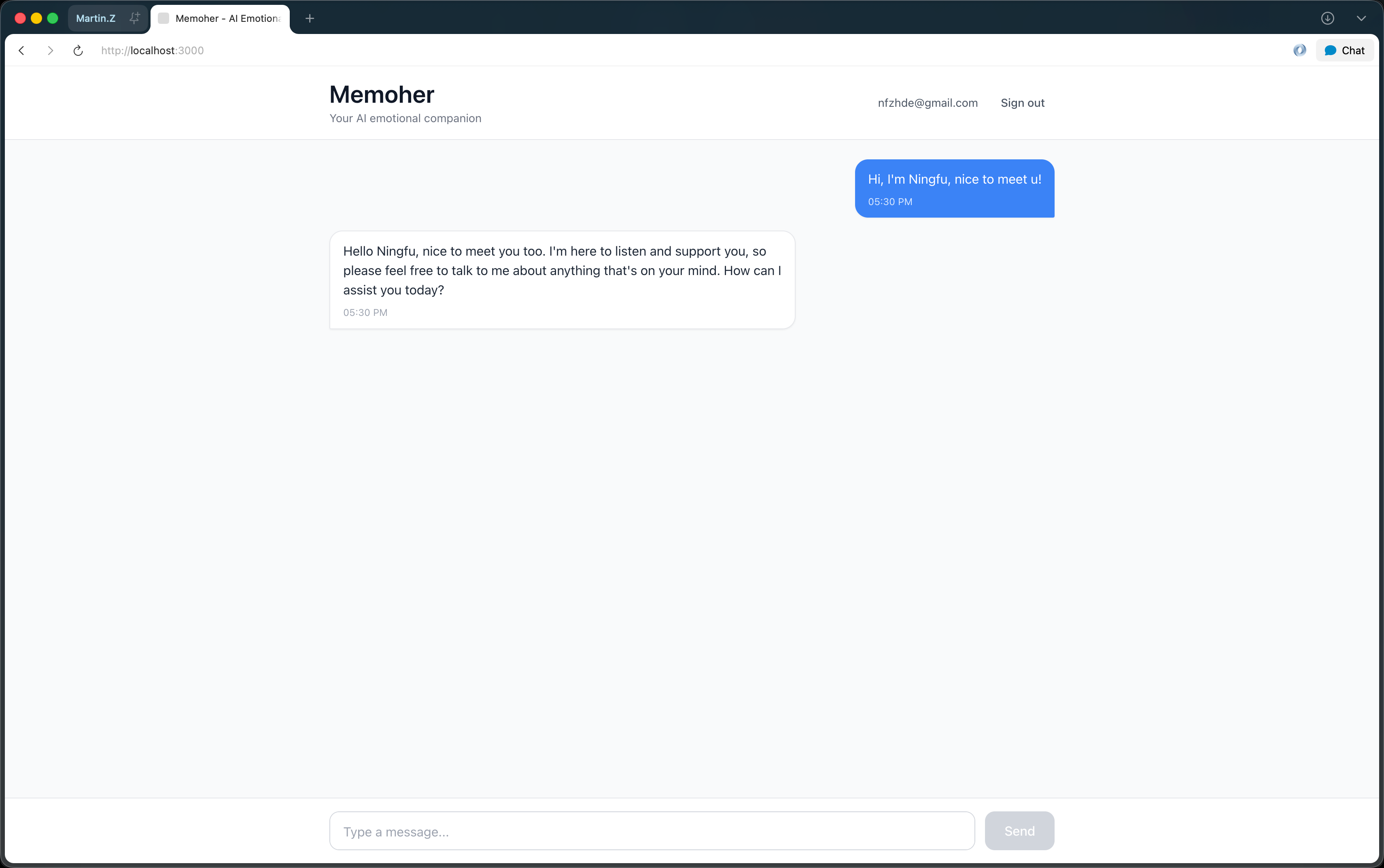

The app is Memoher, an AI emotional companion powered by MiniMax's language model. Users log in, have conversations with an AI that remembers context, and pick up where they left off.

Tech stack (chosen by me, implemented by AI):

- Backend: Python + FastAPI

- Frontend: Next.js + TypeScript

- Database: Supabase (with row-level security)

- Authentication: Magic link emails via Resend

Hour 0–0.5: Planning

I gave Claude Code a detailed brief: what I wanted to build, my role (decision-maker), its role (implementer), and what "done" looks like.

Claude came back with questions — not code. Architecture questions I needed to answer:

| Decision | My Choice | Why |
|---|---|---|
| Authentication in v1? | Skip it, add in M1 | Prove the core concept first |
| Database migrations? | Version-controlled (Supabase CLI) | Never lose track of schema changes |
| Testing strategy? | Backend + frontend | Build quality habits from day one |

These decisions took 30 minutes. They saved hours of back-and-forth later because Claude could work independently.

Hours 0.5–2.5: Building M0 (Core Prototype)

Claude created the entire project structure — backend API, frontend interface, database schema, shared type definitions, and tests.

My job during this phase: review the plan, approve the approach, and test the result.

The first obstacle: The AI integration returned errors. Claude checked the logs, identified a wrong API endpoint, fetched the correct documentation, and fixed it. Total time: 10 minutes.

When I ran the app for the first time, I sent a message, got a real AI response back, and saw it saved to the database. That was the proof that this method works.

M0 result: ~8,000 lines of code, 45 files, all tests passing. My code contribution: zero lines.

Hours 2.5–6: Building M1 (Production-Ready)

M1 added real authentication, conversation history, database security, and a polished interface.

The workflow stayed the same — Claude proposed a plan with 20 acceptance criteria, I approved it, and Claude implemented phase by phase.

A typical interaction:

Me: "The sign-out button doesn't redirect. It only works after a page refresh."

Claude (after analyzing the code): "Missing a redirect after clearing the session. Adding it now."

Two minutes later, fixed.

This pattern repeated dozens of times. Issues that would normally take me 15 minutes to research and fix were resolved in 2–3 minutes.

M1 result: 17,948 total lines of code, 64 files, 20/20 acceptance criteria met. Production-ready. Still zero lines written by me.

What Made This Work (and What I'd Do Differently)

What worked

Upfront documentation was the single biggest force multiplier. I spent about an hour writing an architecture document, design principles, and a quality checklist. These documents meant Claude could work for hours without asking me questions. The AI had clear guardrails, so it made good decisions on its own.

Milestone-driven development kept the project focused. By defining M0 as the smallest useful version, I had a working prototype after about 2 hours of building. That early win built confidence and gave me something to demo, even if I stopped there.

Treating obstacles as collaboration, not failures, kept the build moving. When the MiniMax API didn't work, when email sending hit rate limits, when environment variables weren't loading, Claude diagnosed the problem each time and proposed a fix. I just confirmed the approach. Average resolution: 5–10 minutes.
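Problems like environment variables not loading tend to surface faster when the app checks for them at startup. The following is a minimal Python sketch of that idea, not code from Memoher. The variable names are hypothetical examples based on the services mentioned in this post.

```python
from __future__ import annotations

import os
from typing import Mapping

# Hypothetical variable names; a real project would list its own.
REQUIRED_VARS = ("MINIMAX_API_KEY", "SUPABASE_URL", "RESEND_API_KEY")


def missing_env_vars(env: Mapping[str, str] = os.environ) -> list[str]:
    """Return the required variables that are unset or blank."""
    return [name for name in REQUIRED_VARS if not env.get(name, "").strip()]


def require_env(env: Mapping[str, str] = os.environ) -> None:
    """Fail fast with a readable message instead of a confusing error later."""
    missing = missing_env_vars(env)
    if missing:
        raise RuntimeError("Missing environment variables: " + ", ".join(missing))
```

Calling `require_env()` once when the backend starts would turn a vague downstream failure into a single error that names exactly what is missing, which is also easy to paste back to the AI for diagnosis.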

What I'd tell someone trying this for the first time

Start with something small. Don't build a social network. Build a simple tool, a personal dashboard, or a content site. Learn the workflow before tackling complexity.

Write your constraints down. Before any code, describe what the project should look like. What's the architecture? What are the rules? What does "done" mean? The more specific you are, the better the AI performs.

Review plans, not just results. Ask the AI to show you its plan before it starts coding. Catching a wrong approach early saves hours compared to fixing it after the code is written.

Don't expect perfection on the first try. You'll say things like "that works, but the spacing is off" or "good, but add a loading indicator." This iterative refinement is normal — it's how the process works.

Why This Matters Beyond My Project

I'm sharing this because I believe we're at a turning point. Building software has always been gated by one skill: writing code. That gate is opening.

This doesn't mean coding skills are worthless. Understanding how software works still matters. But the barrier has shifted. The critical skills now are:

- Knowing what to build — understanding a problem well enough to describe a solution

- Making good decisions — choosing between trade-offs with clear reasoning

- Communicating clearly — writing constraints and plans that an AI can follow

- Judging quality — recognizing when something is good enough to ship

These are skills anyone can develop. You don't need a computer science degree. You need practice, curiosity, and clear thinking.

I built a production app in 6 hours. Not because I'm a genius — but because I used a method that lets anyone with clear ideas direct AI to build real things.

The question isn't whether you can build software this way. It's what you'll choose to build.

Resources

- Harness Engineering guide (OpenAI) — the original concept

- Claude Code — the AI tool I used

- Memoher — the app I built
